When Tokens Become OKRs

Why agentic coding mandates measure consumption, not product — and what to track instead

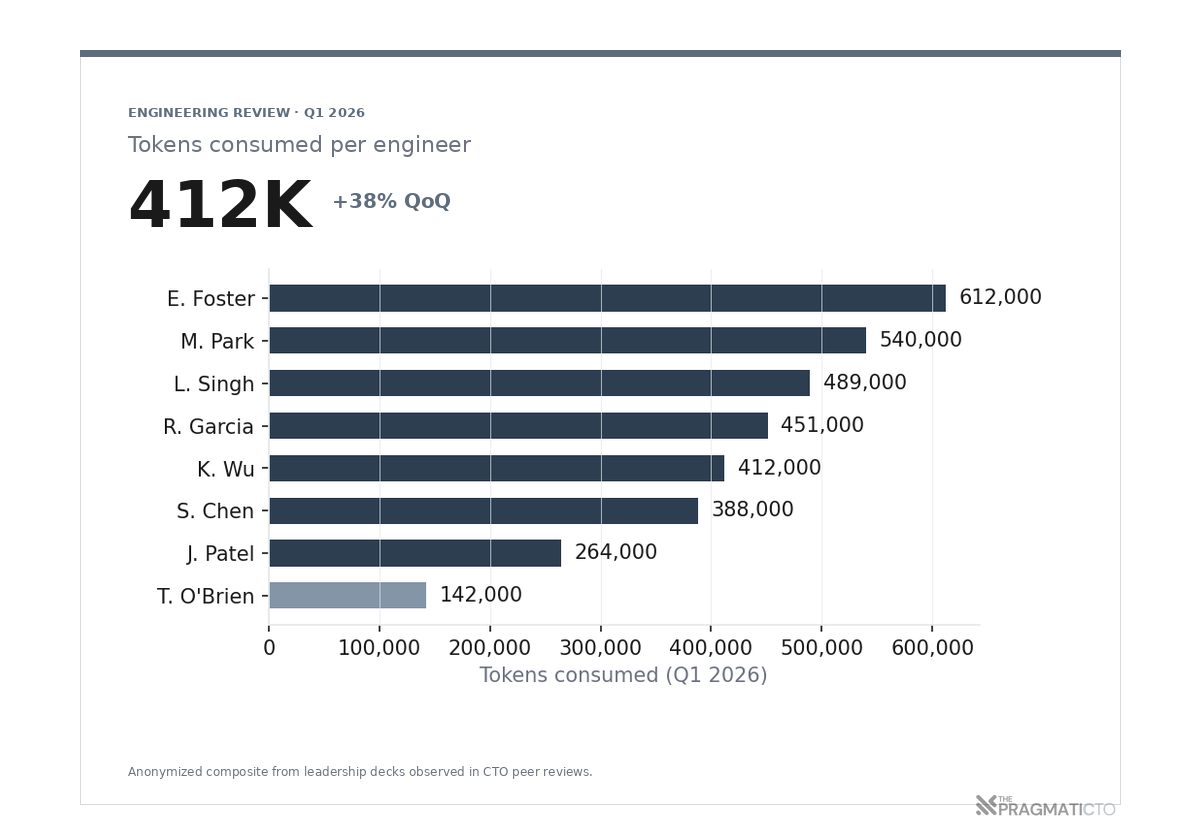

The Boardroom Slide

Slides like this one are becoming commonplace in the boardroom. This is not satire: many organizations are chasing metrics over substance. In what I think is a futile attempt to quantify the impact of AI and to justify what can only be described as AI FOMO (Fear of Missing Out), CTOs are mandating agentic coding adoption, tracking lines of code, and now tracking token usage as engineering KPIs.

The reaction in the room is always the same. Someone leans forward, points at the bar at the bottom of the chart, and asks why that engineer's number is so low. Someone else asks how we get the team average up next quarter. The conversation that follows is about goals, targets, individual development plans for low consumers. Nobody in the room is asking what the engineers built with those tokens.

Twelve months ago, the conversation was about whether engineers would use AI tools at all. In April 2025, Tobi Lütke told Shopify that "reflexive AI usage is now a baseline expectation," and that teams would have to demonstrate why a job could not be done with AI before requesting headcount.

Tobi is not alone in this, and many others have followed suit. In May 2025, Klarna CEO Sebastian Siemiatkowski told investors that "AI can already do all our jobs," and that "cost unfortunately seems to have been a too predominant evaluation factor… what you end up having is lower quality." Another CEO, of a 1,500-person company, told the team "you have geniuses in your pocket, stop thinking and use AI."

It's 2026, and all these AI-first, AI-only mandates now have metrics attached. CTOs are reporting tokens consumed per engineer, agent invocation counts, autocomplete acceptance rates, and pull requests with an AI co-author tag. Boards take all of it as engineering productivity and nod along.

Throughout my career I have been incredibly opinionated about developer experience and productivity metrics. Frankly, all of these metrics are garbage. None of them measure the product the engineers are building. They measure how much the vendor billed the org last quarter, sorted by employee.

How Mandates Become Metrics

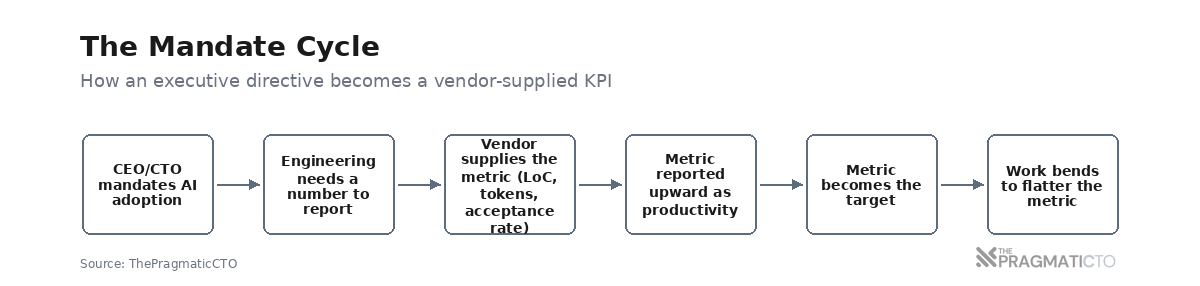

Here is the eternal corporate cycle: a CEO or CTO declares that the org will adopt X, which means engineering leadership now needs a number to report upward. The easiest number to report is whichever one the vendor already publishes on a dashboard. That number flows upward, gets adopted as a target, and by the next quarter the work has started bending to flatter it.

As an industry we have gone through this cycle many times before: lines of code, velocity, story points, deploys per week, commits, and so on. Every time we have collapsed a metric into a target, the metric has eventually rotted into theater, and what was once useful has become purely performative.

This is not a new idea. Goodhart's Law has been with us for over fifty years, and as Marilyn Strathern put it so well: "When a measure becomes a target, it ceases to be a good measure."

Software engineers are smart (at least if you are doing your hiring right), and they will eventually find a way to game the metric, no matter what the metric is. What the industry is currently doing with tokens and lines of code is setting the wrong incentives and creating massive problems down the line.

For example, lines of code made a comeback last year as part of the AI-first push, and the results are predictable: GitClear measured an 8x increase in duplicated code blocks during 2024. The cheapest way to look productive on the metric is now to ship more code, regardless of whether the code needed to exist.

Of course, more lines of code means more bugs, more maintenance, more complexity, and more risk. None of these is tracked by the metric or factored into the cost of the code.

Tracking token usage has the same deficiencies as tracking lines of code and causes the same problems down the line. It is also objectively worse, because you are tracking a metric that your vendor cares about but that is meaningless to the org, to your customers, and to your business.

Tracking tokens is the equivalent of reporting on AWS spend, and equating a larger bill with higher productivity; the problem is that the bill is not a measure of productivity, it is a measure of consumption, just like tokens are.

The Vendor Narrative

So you might be thinking, "OK, if all these metrics are garbage, why are all these C-level executives mandating them? Why is it getting this much adoption, across so many orgs?"

Well, there's a reason for this, and it starts with vendor biases and incentives; let's take a look at the Anthropic 2026 Agentic Coding Trends Report.

One of the little nuggets in the report is: "In 2026, the value of an engineer's contributions shifts to system architecture design, agent coordination, quality evaluation, and strategic problem decomposition. The primary human role in building software is orchestrating AI agents that write code."

Anthropic sells the harness that's replacing the implementer role, and the report is telling CTOs the implementer role is going away. Call me crazy, but there is a bit of conflict of interest here.

Now, the incentive for Anthropic is pretty clear; orchestrators consume more tokens than implementers. An engineer typing code uses one autocomplete at a time, while an engineer running four agents in parallel — reviewing, decomposing, evaluating, coordinating — burns through tokens at a different order of magnitude.

More consumption, more revenue.

This is really a marketing play. Look at Cursor, Cognition's Devin, GitHub's Copilot Workspace, Augment Code — every productivity narrative aligns with consumption-based pricing in a way that should make a CTO suspicious of the claims.

FOMO is a powerful motivator, and the vendor narratives have been extremely effective at selling a future that is not yet here. Now, I'm not denying that these tools are useful, can be force multipliers for experienced engineers, and can help teams get more done in less time. But they are not the panacea that some are making them out to be; nor are they good enough to justify these overarching mandates in the way we are seeing them adopted today.

And if you don't believe me, just look at Microsoft. In June 2025, Microsoft's Julia Liuson, the President of the Developer Division, told her managers in an internal memo that "AI is now a fundamental part of how we work… using AI is no longer optional — it's core to every role and every level."

So far these AI-first mandates and tokens-as-metrics have ended up backfiring in spectacular fashion. Klarna spent 2024 telling investors AI was doing the work of 700 customer service agents, then admitted in May 2025 that "cost unfortunately seems to have been a too predominant evaluation factor… what you end up having is lower quality." They started rehiring humans. Customer service costs in Q3 2025 came in at $50 million, against the $60 million in claimed AI savings.

What Anthropic's Own Footnotes Say

There are a couple of interesting things in the report worth calling out:

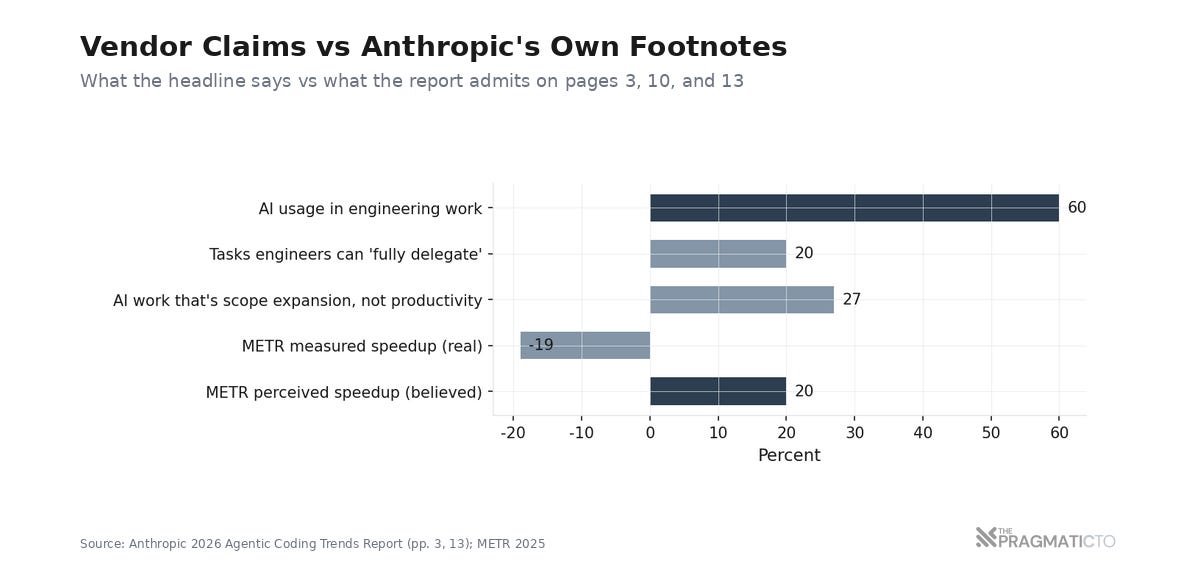

Page 3 of the Anthropic report, in the foreword: "developers use AI in roughly 60% of their work, they report being able to 'fully delegate' only 0-20% of tasks." Because of the hype around agentic coding, we have companies making decisions on the first number, and not the second. The second number is the one that should govern hiring, headcount, and architecture decisions.

Page 13 has a second admission, also worth quoting verbatim: "Notably, about 27% of AI-assisted work consists of tasks that wouldn't have been done otherwise: scaling projects, building nice-to-have tools like interactive dashboards, and exploratory work that wouldn't be cost-effective if done manually." As much as I hate to admit it, internal tooling and exploratory work is often less critical and sometimes optional, and it does not contribute directly to the bottom line.

Elsewhere, the report walks the orchestrator framing back, in language that doesn't read as a walk-back unless you're looking for it. Page 10: "engineers report being able to 'fully delegate' only a small fraction of their tasks. The apparent contradiction resolves when you understand that effective AI collaboration requires active human participation."

There is enough independent data to support that claim. For example, METR's 2025 randomized trial of 16 experienced open-source developers measured a 19% slowdown when AI tools were used. The developers in that same study believed they were 20% faster.

Faros AI's telemetry on 10,000 developers across 1,255 teams shows the same shape at scale and with different methodology limits. PR review time on high-AI-adoption teams went up 91%. Average PR size went up 154%. Bugs per developer went up 9%.

Anthropic's report is honest about all of this if you read past the foreword. The trouble is that very few CTOs are reading past the foreword, and they are falling prey to the marketing and FOMO. In turn, the rest of the C-suite is reading a slide deck that summarizes the foreword, which only compounds the problem.

What to Do Instead

Critique without an alternative is just complaint, and believe it or not I am trying to be constructive here. So what should you do instead of tracking tokens, lines of code, and mandating every engineer to use an agent?

I do not have a perfect answer yet, but a few things have become pretty clear from working with my own teams and from the telemetry already cited above. None of them require a token target, an adoption-rate dashboard, or any other vendor-supplied metric to operate.

The real bottleneck is review capacity, not generation capacity.

The 91% blowup in PR review time on high-AI-adoption teams (Faros, cited above) is not an inefficiency to optimize away; it is the new constraint your engineering org is operating against. AI-generated code is cheap. The human ability to evaluate that code is not.

An engineer in Anthropic's own report puts the underlying skill in one sentence: "I'm primarily using AI in cases where I know what the answer should be or should look like." This means we have to account for the fact that the work an agent generates is not done until a human can sign their name to it.

There are a few ways to tackle the review problem. Personally, I push for smaller PRs, incremental changes, and keeping context human-readable. Alongside that, tracking regressions and defect rate will tell you if the team is rubber-stamping AI-generated PRs because the queue is too long.
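As a rough illustration of what tracking the review side could look like, here is a minimal Python sketch. The `PullRequest` record and its field names are hypothetical, not any vendor's schema, and deciding which PRs "caused a regression" is assumed to come from your own incident or revert tracking.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median


@dataclass
class PullRequest:
    # Hypothetical record; adapt the fields to whatever your tooling exports.
    lines_changed: int
    opened_at: datetime
    approved_at: datetime
    caused_regression: bool  # True if a later incident or revert was traced to this PR


def review_health(prs: list[PullRequest]) -> dict[str, float]:
    """Summarize PR size, review time, and escaped defects for merged PRs."""
    if not prs:
        raise ValueError("need at least one pull request")
    review_hours = [
        (pr.approved_at - pr.opened_at).total_seconds() / 3600 for pr in prs
    ]
    return {
        "median_lines_changed": median(pr.lines_changed for pr in prs),
        "median_review_hours": median(review_hours),
        "regression_rate": sum(pr.caused_regression for pr in prs) / len(prs),
    }
```

Read the three numbers together rather than one at a time. Large PRs approved quickly while the regression rate climbs is the rubber-stamping pattern; none of the three should become a target on its own.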

Replace mandates with structured exploration time.

The orgs that have found durable wins with agentic coding are not the ones with the highest adoption rates or the biggest token budgets. They are the ones that gave teams permission to experiment, expected some pilots to fail, and reported findings honestly without putting consumption numbers on a leadership slide.

Drew Breunig's 10 Lessons for Agentic Coding puts it well: "when code is cheap, implement to learn" — teams need room to discover what agents are actually good at on their stack, in their codebase, against their constraints. A quarterly token target is the opposite of permission; it is a coercion budget with a vendor-supplied number sitting on top of it.

Measure ownership, not consumption.

If nobody on your team can explain a critical path the agent wrote, the org is carrying a liability that has not been priced into the maintenance budget yet. The right question to ask in a code review is not "how was this generated?" but "who can defend this in production at 3am?" Track that number across the team. It is much harder to game than tokens consumed, and it is a much better proxy for whether AI-generated code is adding value to the codebase or detracting from it.
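One way to make that number concrete is sketched below in Python. Everything here is an assumption for illustration: the path names, the engineer names, the idea that "can explain it" is established by a walkthrough or self-attestation, and the threshold of two owners per path.

```python
def ownership_report(
    critical_paths: dict[str, set[str]], minimum_owners: int = 2
) -> dict[str, object]:
    """Count critical paths with enough people who can explain them unaided.

    critical_paths maps a path name to the engineers who can defend it.
    minimum_owners is an assumed threshold, not an industry standard.
    """
    at_risk = sorted(
        path
        for path, owners in critical_paths.items()
        if len(owners) < minimum_owners
    )
    return {
        "paths": len(critical_paths),
        "covered": len(critical_paths) - len(at_risk),
        "at_risk": at_risk,
    }


# Hypothetical inventory of critical paths and who can explain each one.
paths = {
    "checkout/payment_capture": {"ana", "raj"},
    "auth/token_refresh": {"ana"},
    "billing/invoice_export": set(),
}
report = ownership_report(paths)
print(report["covered"], "of", report["paths"], "critical paths covered")
print("at risk:", report["at_risk"])
```

The at-risk list is the useful output: it names the code nobody can defend, which is where review and pairing time should go next.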

One phrase that Drew uses in the article is: "Agentic code is free as in puppies." The implementation is cheap. Ownership and ongoing maintenance are not, and the cost shows up two quarters later when something breaks and nobody on the team can say why.

What I'm Doing

Agentic coding has made code "cheaper." The cheapness is borrowed from a vendor whose pricing, model weights, and output quality you do not control, and not all of the code being produced this way is equally durable on the other end of a vendor-side change. Anthropic or OpenAI can adjust compute allocation overnight, lower the quality of a model you depended on yesterday, and put your pipeline at risk before you finish your morning standup. That risk goes on the same balance sheet as the vendor lock-in I described earlier.

Code quality is still the engineering function's responsibility, and ultimately yours and mine as CTOs. Agents change how the work gets done; they do not change who signs their name to it. As good as agentic coding is — and it keeps getting better — I am not willing to let it run free without supervision.

Design, architecture, and final review are the parts of the process that have to stay human, because those are the parts where the org's exposure to liability, downtime, and competitive disadvantage is most concentrated.

The two side projects I'm building right now — Structpr.dev and Shiplog.ca — are the testbed. I'm using both to experiment with the workflow. Some days the agent saves me hours; other days it generates work I have to throw away wholesale. The question I keep poking at is where the human input on design direction earns its keep, and so far the honest answer is: in more places than I expected. None of this is a finished playbook. It is the slow work of figuring out, on my own code, where the line between human and agent should sit. I'll write up what I learn when there's a real pattern to share. There isn't one yet.

Questions for Your Next Board Meeting

When your board asks for AI adoption metrics, what number are you giving them — and what is that number measuring once you strip the vendor framing off it?

If your agentic coding budget tripled tomorrow, would the work get better or just larger?

Who on your team can explain the critical paths in your codebase from memory? Is that number going up or down?

The vendor's report tells you engineers are becoming orchestrators. Whose business model does that framing serve?

Mandates make the dashboard look better. Nothing else.