What to Measure When the CEO Asks for Engineering Metrics

How to make sure you are measuring the right things

Every engineering leader gets this email. "The board wants engineering metrics for next quarter's deck; can you put something together?" Fourteen words that launch a thousand bad dashboards.

We have all been there. The instinct is to grab whatever is closest---DORA metrics, sprint velocity, maybe a cycle time chart, or, even worse, LoC (lines of code)---and arrange them on a slide that looks like you've been tracking this all along. The board nods. The CEO nods. Then someone asks a follow-up question, and you spend the next six months defending numbers you picked in an afternoon.

The problem isn't that you chose the wrong metrics (unless you picked LoC). The problem is that "give me some metrics" is the wrong ask and the wrong conversation, and it will eventually lead to disaster. If we take the question "How do you measure engineering performance?" seriously, we need to understand that it's four different questions masquerading as one, and most CTOs answer whichever one they find easiest rather than the one being asked.

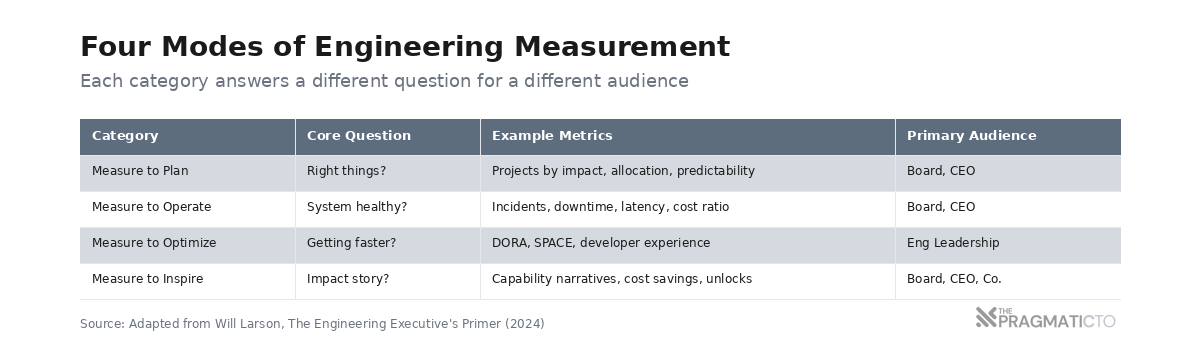

Will Larson put it plainly in *The Engineering Executive's Primer*: "There is no one solution to engineering measurement, rather there are many modes of engineering measurement, each of which is appropriate for a given scenario." Four modes. Four questions. Getting the right answer starts with figuring out which one you're being asked.

One Question, Four Answers

Larson's framework splits engineering measurement into four categories, each answering a different question. They aren't interchangeable. Picking the wrong one for your audience is worse than picking no metrics at all.

Measure to Plan. Are we working on the right things? Track shipped projects by team and their impact on the business. This is the language boards speak natively---investment and return, allocation and outcome. If you can show that 60% of engineering effort went to features that moved revenue and 15% went to infrastructure that prevented last quarter's outage from recurring, you've answered the planning question. Most boards don't need more than this.

Measure to Operate. Is the system healthy right now? Incidents, downtime, latency, engineering costs normalized against business metrics. Operations metrics answer a question that sounds mundane but matters more than anything on your roadmap: should you be following your plan or swarming to fix a critical problem? A CEO who sees three major incidents in a quarter understands why the feature roadmap slipped; a CEO who sees a missed roadmap with no context assumes engineering is slow.

Measure to Optimize. Are we getting faster or slower? This is DORA's domain---deployment frequency, lead time, change failure rate, recovery time---and SPACE territory too (satisfaction, performance, activity, communication, efficiency). Both are diagnostic: useful for your engineering leads diagnosing bottlenecks, useless in front of your board. The problem is translation. A non-engineer who hears "our deployment frequency increased 40%" assumes that means more value; bridging that gap requires technical context a quarterly meeting doesn't provide.

Measure to Inspire. What's the story of engineering's impact? Most CTOs skip this category---which in my opinion is a mistake, because inspiration metrics are the narratives that change how the organization thinks about engineering. Not dashboards. Stories: the migration that cut infrastructure costs 40%, the platform rebuild that compressed a six-week feature into two days, the reliability work that turned a churning enterprise customer into a case study. When the board hears those, engineering stops looking like a cost center and starts looking like the reason the company can do things competitors can't.

Now, if you have been paying attention so far, you might have noticed that I managed to mention two things: the categories are not interchangeable, and each one has an audience.

Most CTOs set themselves up for failure by picking the wrong category for the audience in front of them. What your board wants, what your engineering leads need, and what your company needs are three different things. Inspiration metrics are the hardest to build and the easiest to skip; they're also what gets you headcount next year.

Five Ways to Destroy Trust With Your Dashboard

Knowing what to measure is half the problem. The other half is knowing how measurement goes wrong---and believe me, it will go wrong, in pretty predictable ways. Here are five:

1. Goodhart's Law, now with infinite leverage. "When a measure becomes a target, it ceases to be a good measure." Charles Goodhart made that observation in 1975; the software industry has spent fifty years proving him right. Story point inflation. Deployment frequency gaming. Bug counts manipulated by closing duplicates. Every metric that touches a performance review gets optimized for the metric, not the outcome (developers are smart and they will find a way to game the system to their advantage).

AI has the potential to make this worse. When generating code costs next to nothing, code volume metrics become meaningless; when a developer can mass-produce pull requests, PR count stops correlating with value delivered. Goodhart's Law had a natural ceiling when humans were the bottleneck; remove the bottleneck and the gaming potential is unlimited.

2. Measuring individuals instead of teams. Dan North put it precisely: "Attempting to measure the individual contribution of a person is like trying to measure the individual contribution of a piston in an engine---the question itself makes no sense." Software is a team activity. The developer who mentors three juniors ships less code and creates more value than the one who heads-down grinds features. Individual metrics can't capture that; they punish it (and if you are a CTO, you are the one who is responsible for the team's success).

McKinsey learned this the hard way. They tried to measure "individual developer productivity" in 2023 and the response was brutal---Kent Beck, Gergely Orosz, and Dan North all piled on. Beck's line was the sharpest: measuring developer productivity by coding time is "like measuring surgeon productivity on what percentage of their time they were cutting with a scalpel---and ignoring whether the patient got better." The rebuttal from Beck and Orosz became the most popular Pragmatic Engineer article of 2023. Individual contribution analysis doesn't just fail as a metric; it poisons the team. You get adversarial dynamics, eroded trust, and people optimizing for the wrong things.

3. The measurement loop. Stakeholders keep asking for more metrics---different cuts, new dashboards, one more data point---and nothing you build satisfies them. I've been in this loop. It's not a metrics problem. It's a trust deficit wearing a metrics costume. No dashboard fixes a broken relationship between engineering and the business; if you're caught in this cycle, put the dashboard down and have the hard conversation about what's actually wrong. Larson says it plainly: the loop is a signal, not something you solve with more data.

4. Optimization metrics in the wrong room. Cycle time, deployment frequency, change failure rate---these are diagnostic tools for engineering leaders, not performance indicators for the board. Put them in front of non-technical stakeholders and they get misread; a higher deployment frequency sounds good, but it says nothing about whether you shipped the right things. Worse, the board starts setting targets. "Can we get deployment frequency to daily?" Now you're optimizing for the metric instead of the outcome. Larson is blunt about this: CEO and board get planning and operations metrics. Full stop.

5. Perfection paralysis. The opposite failure mode. Some CTOs refuse to measure anything until they have the perfect framework, the perfect instrumentation, the perfect dashboard. They read about DORA, SPACE, DX Core 4, DevEx; they evaluate engineering intelligence platforms from LinearB, Jellyfish, Swarmia, Cortex; they attend conferences and take notes. And they measure nothing while they decide.

My advice? Start with something imperfect. Larson's sequencing advice is to measure easy things first to build trust with stakeholders, even if the data isn't precise, and to take on only one new measurement task at a time.

The Ghosts in the Machine

As if things weren't complicated enough, the AI era has added a new layer. Having metrics that measure what you think they measure was already hard; AI is making it harder.

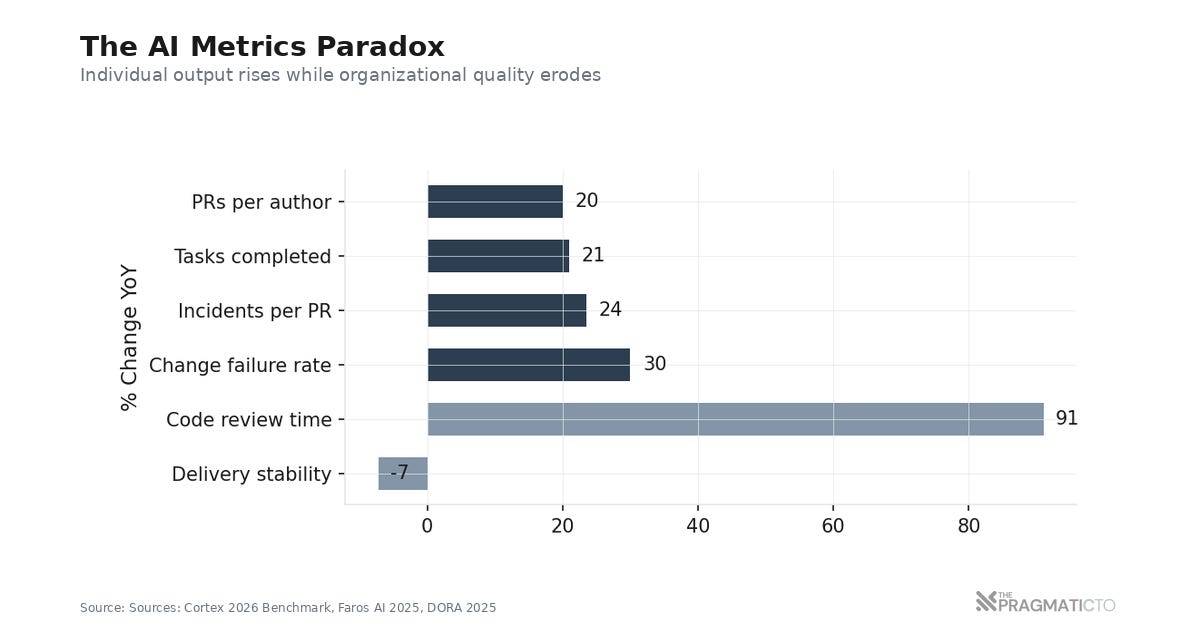

The 2024 DORA report found that a 25% increase in AI adoption correlates with a 1.5% drop in delivery throughput and a 7.2% drop in delivery stability.

The individual-level numbers tell a different story. Cortex's 2026 benchmark shows PRs per author up 20% year-over-year. Sounds like progress. But incidents per PR increased 23.5%; change failure rates climbed roughly 30%. More output, more breakage. The Faros AI report shows the same pattern at larger scale: tasks completed up 21%, PRs merged up 98%, but code review time increased 91% and PR size grew 154%. In a way, AI is starting to clog the pipeline and bring down overall quality.

Every traditional metric is now suspect. Deployment frequency goes up because AI generates more deployable units; Plandek's right that "more deployments aren't always a sign of progress." Lead time shrinks---but only for the coding phase. Review, testing, approval? At best the same as before, at worst taking much longer. Change failure rate looks flat until you remember that volume is up; a flat rate on higher volume means more absolute failures. Recovery time is the ugliest one: developers stall because they're debugging code they didn't write and don't fully understand.
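The flat-rate trap is easier to see with numbers. Here is a minimal Python sketch; the deployment counts and the 10% failure rate are made up purely to illustrate the arithmetic, not taken from any of the reports above.

```python
def failed_changes(deployments: int, change_failure_rate: float) -> float:
    """Expected number of failed deployments in a period."""
    return deployments * change_failure_rate


# Hypothetical quarter-over-quarter numbers: the rate stays at 10%,
# but deployment volume grows by half.
before = failed_changes(deployments=200, change_failure_rate=0.10)
after = failed_changes(deployments=300, change_failure_rate=0.10)

print(f"before: {before:.0f} failed changes, after: {after:.0f} failed changes")
```

Same rate on the dashboard, half again as many broken deployments for someone to clean up. That is why the rate and the absolute count belong side by side.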

DORA added a fifth metric in 2025. Rework rate---unplanned follow-up deployments caused by production issues. It exists because the original four metrics miss something important: the cost of fixing what you just shipped. You can have perfect deployment frequency and still be drowning in rework.
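As a rough illustration, rework rate can be computed as the share of deployments that were unplanned follow-ups. The sketch below assumes you can tag each deployment with a `rework` flag; that field name and the sample log are hypothetical, and how you decide what counts as rework is a judgment call for your own team.

```python
# Hypothetical deployment log. "rework" marks an unplanned follow-up
# deployment made to fix a production issue.
deployments = [
    {"id": 1, "rework": False},
    {"id": 2, "rework": False},
    {"id": 3, "rework": True},
    {"id": 4, "rework": False},
    {"id": 5, "rework": False},
    {"id": 6, "rework": True},
    {"id": 7, "rework": False},
    {"id": 8, "rework": False},
]


def rework_rate(log: list[dict]) -> float:
    """Fraction of deployments that were unplanned fixes."""
    if not log:
        raise ValueError("no deployments in the period")
    reworked = sum(1 for d in log if d["rework"])
    return reworked / len(log)


rate = rework_rate(deployments)
print(f"rework rate: {rate:.0%}")
```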

Does this mean metrics are done? No. But you need to read your metrics with more skepticism now, which means pairing every speed metric with a quality metric, breaking lead time down by stage rather than treating it as a single number, and watching rework rate as your earliest warning signal. A dashboard that only shows throughput is measuring the accelerator without looking at the road.
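Breaking lead time down by stage is mostly a matter of subtracting timestamps. The Python sketch below assumes each change carries four timestamps; the field names, stage boundaries, and sample data are all hypothetical, so map them to whatever your own tooling records.

```python
from datetime import datetime
from statistics import median

# Hypothetical change records; the field names are assumptions, not a
# schema from any particular tool.
changes = [
    {
        "first_commit": datetime(2025, 3, 3, 9, 0),
        "pr_opened": datetime(2025, 3, 3, 11, 0),
        "pr_approved": datetime(2025, 3, 5, 11, 0),
        "deployed": datetime(2025, 3, 5, 15, 0),
    },
    {
        "first_commit": datetime(2025, 3, 4, 10, 0),
        "pr_opened": datetime(2025, 3, 4, 11, 0),
        "pr_approved": datetime(2025, 3, 7, 11, 0),
        "deployed": datetime(2025, 3, 7, 13, 0),
    },
]

# Each stage is the gap between two consecutive timestamps.
STAGES = {
    "coding": ("first_commit", "pr_opened"),
    "review": ("pr_opened", "pr_approved"),
    "release": ("pr_approved", "deployed"),
}


def median_hours_by_stage(records: list[dict]) -> dict[str, float]:
    """Median hours spent in each stage across all records."""
    result = {}
    for stage, (start, end) in STAGES.items():
        hours = [(r[end] - r[start]).total_seconds() / 3600 for r in records]
        result[stage] = median(hours)
    return result


stage_hours = median_hours_by_stage(changes)
for stage, hours in stage_hours.items():
    print(f"{stage}: {hours:.1f}h")
```

With this sample data the coding stage takes a couple of hours while review takes days, which is exactly the kind of bottleneck a single lead time number hides.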

Where to Start

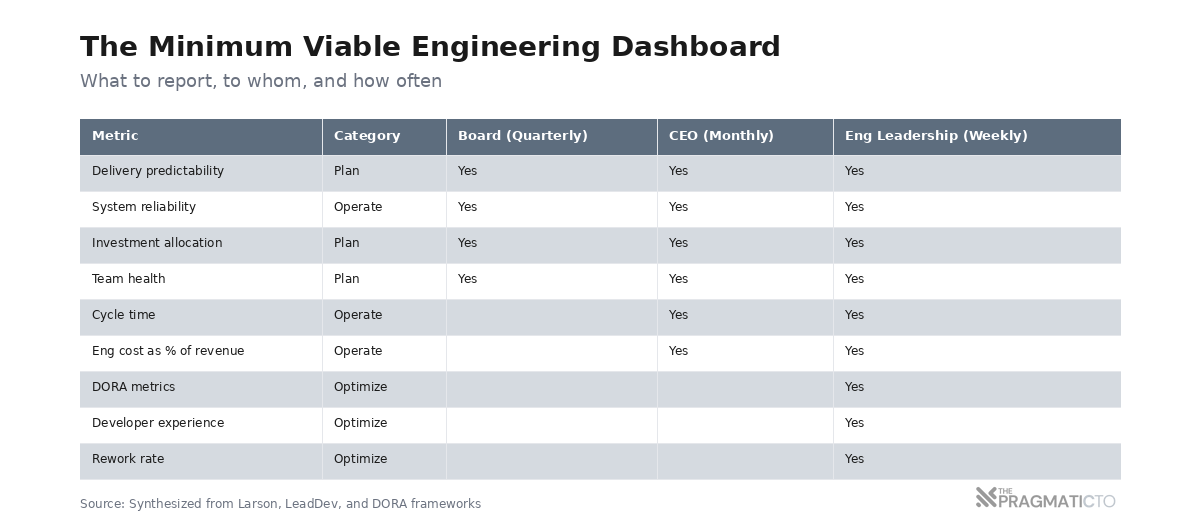

Frameworks can be useful. Here is a starting point; adapt it for your context, your stage, and your stakeholders.

Delivery predictability. Did we ship what we said we would? This is the metric that builds or destroys credibility with the board. Not "how much did we ship" but "did we hit our commitments?" Track it as a percentage; trend it over quarters. When the number drops, come prepared with a root cause and a plan.
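A minimal sketch of the calculation, assuming you count commitments and deliveries as whole items per quarter. The numbers are invented, and what counts as a "commitment" is something you would need to define with your own stakeholders.

```python
# Hypothetical quarterly data: (items committed, items delivered).
quarters = {
    "Q1": (10, 8),
    "Q2": (12, 9),
    "Q3": (10, 9),
    "Q4": (8, 6),
}


def predictability(committed: int, delivered: int) -> float:
    """Percentage of committed items that shipped in the quarter."""
    if committed <= 0:
        raise ValueError("committed must be positive")
    return 100 * delivered / committed


trend = {q: predictability(c, d) for q, (c, d) in quarters.items()}
for quarter, pct in trend.items():
    print(f"{quarter}: {pct:.0f}%")
```

Four quarters side by side is the point: a single 75% says little, but a drop from 90% to 75% is the moment to show up with a root cause.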

System reliability. Incidents, uptime, recovery time. Boards understand reliability intuitively---the system works, or it doesn't. Pair incident count with recovery time; a team that has five incidents but recovers in minutes is in better shape than one that has two incidents and takes days to resolve them.
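The five-incidents-versus-two comparison can be sketched in a few lines of Python. The recovery times below are hypothetical, chosen to mirror the example in the text.

```python
from datetime import timedelta

# Hypothetical recovery times per incident for two teams in one quarter.
team_a = [timedelta(minutes=m) for m in (5, 12, 8, 20, 15)]  # five incidents
team_b = [timedelta(days=2), timedelta(days=3)]              # two incidents


def summarize(recoveries: list[timedelta]) -> dict:
    """Incident count, mean time to recover, and total time impaired."""
    total = sum(recoveries, timedelta())
    return {
        "incidents": len(recoveries),
        "mean_recovery": total / len(recoveries),
        "total_impaired": total,
    }


summary_a = summarize(team_a)
summary_b = summarize(team_b)
for name, s in (("team A", summary_a), ("team B", summary_b)):
    print(f"{name}: {s['incidents']} incidents, "
          f"mean recovery {s['mean_recovery']}, total {s['total_impaired']}")
```

On incident count alone, team A looks worse. Put recovery time next to it and the picture flips: one hour of impact against five days.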

Investment allocation. Where did engineering effort go? New features, maintenance, unplanned work, technical debt---this is how the board decides whether engineering is pulling in the right direction. Swarmia benchmarks it (roughly 60% new features, 15% productivity improvements, 10% keeping the lights on), but your numbers will look different and should; the point isn't hitting their targets, it's knowing your own and explaining the reasoning behind them.
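If you already log effort against work categories, the allocation itself is simple arithmetic. The sketch below assumes effort is recorded in engineer-weeks per work item; the categories come from the paragraph above, while the log format and the numbers are hypothetical.

```python
from collections import Counter

# Hypothetical effort log: (category, engineer-weeks).
work_log = [
    ("new features", 18),
    ("new features", 12),
    ("maintenance", 8),
    ("unplanned work", 6),
    ("technical debt", 6),
]


def allocation(log: list[tuple[str, int]]) -> dict[str, float]:
    """Percentage of total effort spent in each category."""
    totals = Counter()
    for category, weeks in log:
        totals[category] += weeks
    grand_total = sum(totals.values())
    return {c: 100 * w / grand_total for c, w in totals.items()}


shares = allocation(work_log)
for category, pct in shares.items():
    print(f"{category}: {pct:.0f}%")
```

The output is only as honest as the categorization behind it, so agree on what counts as "unplanned" before you trend it.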

Team health. Attrition, hiring pipeline, engagement scores. The leading indicator that nobody reports until it's too late. A team losing senior engineers will show up in your delivery metrics six months from now; by then the damage is done. Report this proactively.

Three principles hold no matter which category. Only report metrics you're already tracking---the moment you build a separate collection for the board, you're maintaining two systems and trusting neither. Show trends, not snapshots; one quarter is noise, four quarters is signal. And never show a speed metric alone. Deployment frequency without change failure rate beside it is a lie of omission; cycle time without reliability is the same trick. If you are not already doing this, you are setting yourself up for failure.

Swarmia's advice captures the right mindset: think of metrics like a thermometer. They're the outcome of good practices, not a target to chase.

As a recap, here are some questions to ask yourself:

When the CEO asks for engineering metrics, which of the four categories are they asking about---and are you answering that question or the one you're most comfortable with?

How many of your current metrics would survive Goodhart's Law? If your team optimized for nothing but hitting those numbers, would the outcomes improve or decay?

What story is your dashboard telling? Is it the story your engineering team would tell, or a performance your engineering team has learned to put on?

If you stripped away every metric that measures activity rather than outcomes, what would be left?

Peter Drucker never said "what gets measured gets managed." What he said was closer to the opposite: "Because knowledge work cannot be measured the way manual work can, one cannot tell a knowledge worker in a few simple words whether he is doing the right job and how well he is doing it." Tom DeMarco, who famously wrote "you can't control what you can't measure," retracted it in 2009: "My answers are no, no, and no."

Measurement isn't the goal. Understanding is. The metrics are supposed to help you make better decisions about your teams, your systems, and your strategy. If they're not doing that---if they're creating theater instead of insight---the problem isn't that you need better metrics. The problem is that you've confused the dashboard for the thing it's supposed to represent.